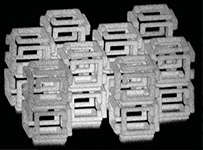

Optical processors, built with “optical neurons,” can perform large-scale high-dimensional linear operations at the speed of light. With recent advances in micro-fabrication, such optical processors can now be 3D-fabricated, but only at limited precision: the optical weights are quantized, which creates a mismatch between the trained model and the fabricated device. To address this issue, we propose a quantization-aware training framework. Our approach accounts for physical constraints during training, leading to robust designs. We numerically demonstrate that it can design state-of-the-art optical processors using diffractive networks for multiple tasks despite quantized learnable parameters. We thus lay the foundation upon which improved optical processors may be 3D-fabricated in the future.
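To make the idea of quantization-aware training concrete, the following is a minimal sketch and not the framework proposed here. It assumes phase-only weights restricted to a few evenly spaced levels, a toy objective (matching a target phase profile), and a straight-through gradient estimator; all three are illustrative assumptions, as are the names `quantize_phase`, `loss`, and `train_quantization_aware`.

```python
import numpy as np

TWO_PI = 2.0 * np.pi


def quantize_phase(phase, levels):
    """Snap phases (radians) to `levels` evenly spaced values in [0, 2*pi)."""
    step = TWO_PI / levels
    idx = np.round(np.mod(phase, TWO_PI) / step) % levels
    return idx * step


def loss(phase, target):
    """Toy objective: sum of |exp(i*phase) - exp(i*target)|^2 over all elements."""
    return float(np.sum(2.0 - 2.0 * np.cos(phase - target)))


def train_quantization_aware(target, levels=8, steps=500, lr=0.1, seed=0):
    """Hypothetical training loop: optimize continuous latent phases while the
    forward pass only ever sees their quantized values."""
    rng = np.random.default_rng(seed)
    latent = rng.uniform(0.0, TWO_PI, size=target.shape)  # continuous weights
    initial = quantize_phase(latent, levels)
    for _ in range(steps):
        q = quantize_phase(latent, levels)  # forward pass uses quantized weights
        grad = 2.0 * np.sin(q - target)     # d(loss)/dq, evaluated at q
        latent -= lr * grad                 # straight-through: treat dq/dlatent as 1
    return initial, quantize_phase(latent, levels)
```

In this sketch the fabricated device would receive only the quantized phases returned at the end, so the objective is always evaluated on values that the limited-precision process could realize, rather than on continuous weights that are rounded after training.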